Artificial Indifference

dezembro 12, 2024 § Deixe um comentário

In the previous essay (The Artificial Other), we explored how the risks associated with artificial intelligence often mirror elements of human hubris, much like Timothy Treadwell’s ill-fated immersion into the wild, as depicted in Werner Herzog’s Grizzly Man. Treadwell’s story is one of passionate yet misguided engagement with Alaskan grizzly bears — a world governed by the harsh and indifferent logic of nature. His overconfidence in his ability to connect with these creatures on his own terms ultimately led to tragedy. It serves as a poignant reminder that nature, as majestic as it may be, operates without regard for care, justice, or morality. It is neither good nor evil; it simply exists. This unyielding indifference, captured so vividly by Herzog, underscores a deeper and more unsettling existential truth: humanity’s inherent vulnerability to forces beyond its control.

LLMs: The Wall Is Now a Mirror

dezembro 6, 2024 § Deixe um comentário

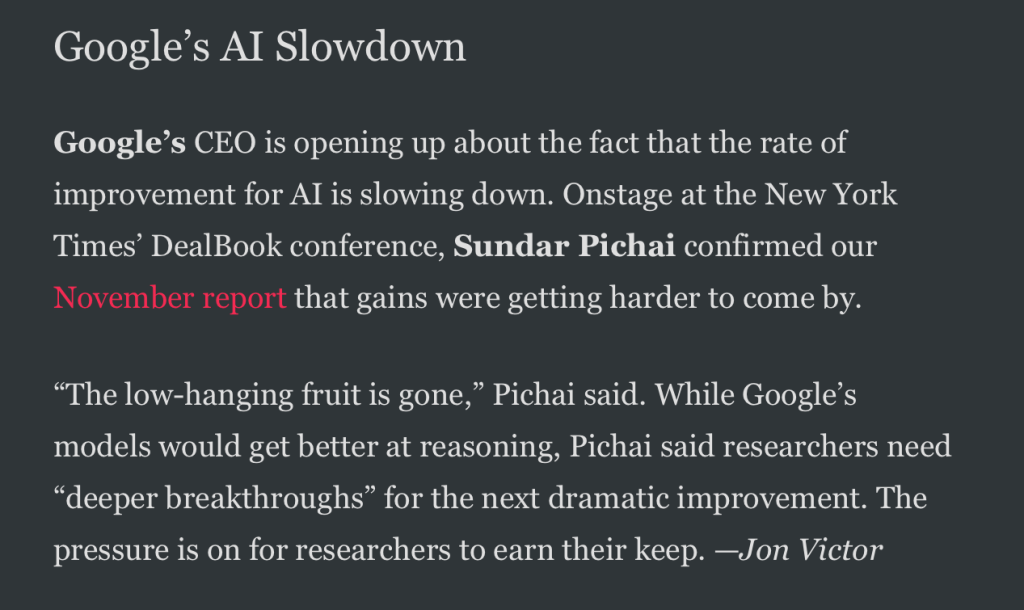

From The Information — Dec 5th 2024

Back in November, I wrote about how Large Language Models (LLMs) seem to be hitting a wall. My piece, “LLMs Are Hitting the Wall: What’s Next?”, explored the challenges of scaling these models and the growing realization that brute force and larger datasets aren’t enough to push them closer to true intelligence. I argued that while LLMs excel in pattern recognition and syntactic fluency, their lack of deeper reasoning and genuine understanding exposes critical limitations.

Indiferença Artificial

dezembro 4, 2024 § Deixe um comentário

Os riscos da IA frequentemente refletem elementos da arrogância humana, muito parecido com o encontro malfadado de Timothy Treadwell com a natureza selvagem em “O Homem Urso” (Grizzly Man, no original), do Werner Herzog.

No texto anterior (O Outro artificial), vimos como os riscos da inteligência artificial frequentemente refletem elementos da arrogância humana, muito parecido com o encontro malfadado de Timothy Treadwell com a natureza selvagem em “O Homem Urso” (Grizzly Man, no original), do Werner Herzog. A história de Treadwell é uma imersão apaixonada, embora ingênua, no mundo dos ursos pardos do Alasca — um mundo governado pela lógica dura e indiferente da natureza. Ilustra um excesso de confiança em nossa capacidade de nos conectarmos com as demais criaturas do planeta em nossos próprios termos. Seu fim trágico é um lembrete de que a natureza, por mais majestosa que seja, não opera com um senso de cuidado, justiça ou moralidade. Ela não é boa nem má; ela simplesmente é. Essa indiferença gritante, como Herzog captura eloquentemente, forma o pano de fundo para uma verdade existencial maior e inquietante: a vulnerabilidade da humanidade diante de forças além do nosso controle.

No entanto, o que acontece quando replicamos essa “indiferença” em nossas próprias criações? Embora a imparcialidade da natureza seja um dado adquirido, a inteligência artificial — sem dúvida o esforço humano mais ambicioso do nosso tempo — não precisa compartilhar essa “qualidade”. E, no entanto, os sistemas de IA, quando mal projetados ou desalinhados com os valores humanos, podem involuntariamente se tornar uma personificação dessa mesma força amoral. Como o urso pardo, uma IA avançada se importa pouco com a fragilidade ou as aspirações da condição humana, a menos que seja explicitamente projetada para isso.

As reflexões de Herzog sobre a indiferença da natureza ressoam profundamente com a tradição existencialista, particularmente os escritos de Albert Camus. Em “O Mito de Sísifo” [1], Camus descreve um universo desprovido de significado inerente, onde a humanidade é deixada para lutar com o absurdo. Tanto Herzog quanto Camus apontam para um mundo que não nos acolhe nem nos condena, apenas nos força a confrontar a insignificância. É esse mesmo confronto, argumento, que está no cerne do relacionamento da humanidade com a inteligência artificial. À medida que nos aventuramos na criação de máquinas capazes de superar nossa própria inteligência, devemos perguntar: vamos desenvolver sistemas que ampliem o cuidado e a consideração moral, ou inadvertidamente liberaremos ferramentas tão indiferentes ao sofrimento humano quanto o mundo natural?

Neste texto, pretendo explorar os paralelos filosóficos e práticos entre a indiferença da natureza e os riscos potenciais impostos pelos sistemas de IA. Com base em Grizzly Man e reflexões sobre o “problema do mal”, proponho que procuremos entender e abordar essa indiferença não apenas como um desafio técnico, mas também como um imperativo moral. A maneira como confrontamos essa questão pode determinar se a IA se tornará uma força indiferente da natureza ou uma ferramenta genuinamente transformadora para o bem.

A indiferença da natureza

Vimos que “O Homem Urso” é uma exploração cinematográfica do lugar frágil da humanidade dentro de um mundo natural indiferente. Pelas lentes de Herzog, a natureza surge não como a entidade benevolente e harmoniosa que Treadwell imaginou, mas como um reino governado pelo caos, sobrevivência e indiferença. Os ursos que Treadwell adorava e procurava proteger não compartilhavam de seus sentimentos humanos; não eram nem agradecidos nem mal-agradecidos pelos cuidados recebidos — simplesmente estavam ali, movidos por instintos além do julgamento moral.

O próprio Herzog ressalta essa perspectiva em sua narração, descrevendo o “universo caótico e indiferente” que ele percebe nos olhos dos ursos. Essa perspectiva se alinha com uma visão mais ampla de que a natureza opera sem consideração pelos valores ou desejos humanos. A força amoral da natureza, como retratada em Grizzly Man, desafia as noções romantizadas de harmonia e equilíbrio que Treadwell tanto prezava, revelando, em vez disso, uma realidade onde a existência se desenrola sem preocupação com vidas ou intenções individuais.

Minha percepção é que a jornada psicológica de Treadwell reflete uma luta profunda com essa indiferença. Sua idealização dos ursos pardos representaria um anseio por conexão e propósito — um desejo de transcender a alienação da vida humana moderna ao mergulhar no que ele via como um mundo mais puro e significativo. No entanto, essa busca o colocou em conflito direto com as duras verdades da ordem natural. Sua incapacidade de reconciliar sua visão romantizada com a realidade da indiferença da natureza levou, em última análise, à sua queda.

Essa tensão entre idealismo e realidade encontra ecos na literatura psicológica, particularmente no conceito de dissonância cognitiva [2] — o desconforto psicológico experimentado quando uma pessoa mantém crenças, atitudes ou comportamentos conflitantes. Para reduzir essa tensão, os indivíduos normalmente resolvem a inconsistência por meio de estratégias que nem sempre podem estar alinhadas com a racionalidade. Eles podem alterar suas crenças ou comportamentos, justificar o conflito introduzindo novas explicações, minimizar o significado da inconsistência ou até mesmo rejeitar evidências que aprofundam o desconforto. Essas estratégias, embora eficazes na redução do sofrimento psicológico, muitas vezes priorizam o alívio emocional em vez da coerência lógica, ilustrando as formas complexas pelas quais os humanos navegam no conflito interno.

A crença de Treadwell na benevolência dos ursos colidiu irreconciliavelmente com seu comportamento, levando a um estado de conflito interno que provavelmente intensificou suas ações e decisões erráticas nos estágios posteriores do filme. Sua luta destaca uma tendência humana mais ampla de projetar significado e moralidade em sistemas inerentemente indiferentes — uma tendência que pode levar à desilusão ou tragédia quando esses sistemas não estão em conformidade com nossas expectativas.

Filosoficamente, a situação de Treadwell pode ser vista como um confronto com o que Camus descreve como “o absurdo” em “O Mito de Sísifo” [1]. Para ele, o absurdo surge da tensão entre a busca da humanidade por significado e o silêncio do universo. A imersão de Treadwell na natureza foi uma busca por significado, uma maneira de encontrar um propósito mais profundo por meio de seu relacionamento com os ursos pardos. Sua falha em reconhecer a indiferença fundamental da natureza reflete o dilema existencial que Camus descreve: quando confrontado com um universo indiferente, como alguém encontra significado sem sucumbir ao desespero?

A representação de Treadwell por Herzog evoca essa luta existencial. Enquanto Treadwell buscava criar uma narrativa de conexão e tutela, a natureza se recusava a retribuir. Seu fim trágico serve como um lembrete da percepção de Camus de que o universo não oferece nenhum significado inerente — cabe a cada indivíduo construir o seu próprio, mesmo diante da indiferença.

Este tema da força amoral da natureza tem sido amplamente discutido na filosofia ambiental. Por exemplo, Holmes Rolston, em Philosophy Gone Wild [3], argumenta que a natureza opera de acordo com seus próprios processos, indiferente às noções humanas de moralidade ou propósito. Da mesma forma, em The View from Lazy Point [4], Carl Safina destaca como os ecossistemas funcionam por meio de um equilíbrio de imperativos de sobrevivência, em vez de qualquer estrutura moral ou ética. Ambas as obras apoiam a representação da natureza de Herzog em Grizzly Man como um sistema autônomo e indiferente.

A indiferença da IA

A indiferença da natureza, como retratada em “O Homem Urso”, encontra um paralelo inquietante no comportamento dos sistemas de inteligência artificial. Como os ursos pardos no documentário de Herzog, os sistemas de IA não são inerentemente malévolos ou benevolentes; eles operam dentro dos limites de seus objetivos de programação e otimização, muitas vezes sem considerar as implicações humanas mais amplas de suas ações. Enquanto a indiferença da natureza é intrínseca, a da IA é projetada — um pensamento incômodo, dado que nós, humanos, possuímos a agência para mitigá-la, embora muitas vezes falhamos em fazê-lo.

Podemos dizer que, em sua essência, tanto a natureza quanto os sistemas de IA funcionam de acordo com regras desprendidas do bem-estar individual. A natureza opera por meio de processos evolutivos que priorizam a sobrevivência e a reprodução em detrimento da moralidade ou da justiça. Da mesma forma, os sistemas de IA executam algoritmos que priorizam objetivos específicos, como eficiência, precisão ou lucro, em detrimento de considerações éticas. Esse alinhamento de prioridades — ou a falta delas — pode levar a resultados prejudiciais ou injustos, mesmo de maneira não intencional.

Por exemplo, considere a questão bem documentada do viés algorítmico na tecnologia de reconhecimento facial. Estudos, como os de Raji, Gebru e Buolamwini [5] e Lohr [6], demonstram que muitos sistemas de reconhecimento facial têm desempenho significativamente pior para pessoas com tons de pele mais escuros. Muito provavelmente, esses vieses não são o resultado de malícia deliberada, mas de conjuntos de dados e processos de design que falharam em levar em conta populações diversas. De qualquer forma, os sistemas exibem uma negligência aos indivíduos que identificam erroneamente, muito parecida com a indiferença da natureza ao destino de Treadwell.

Outro paralelo está no impacto ambiental das tecnologias de IA. O treinamento e a implantação de grandes modelos de linguagem exigem grandes quantidades de poder computacional, resultando em emissões de carbono significativas. Strubell, Ganesh e McCallum [7] quantificaram a pegada de carbono do treinamento de um único LLM (Large Language Model) como o equivalente a cinco vezes as emissões de um carro ao longo de sua vida útil. Esse pedágio ambiental ressalta as consequências não intencionais da otimização da IA, a não consideração do seu impacto ecológico — outra forma de indiferença, desta vez para o mundo natural.

Danos não intencionais: um recurso, não um bug

Os paralelos entre a indiferença da natureza e da IA se tornam ainda mais aparentes ao examinarmos como o dano surge nesses sistemas. Na natureza, o dano geralmente resulta da colisão de dois atores buscando a sobrevivência — predador e presa, por exemplo — sem malícia envolvida. Os sistemas de IA, por sua vez, podem inadvertidamente causar danos quando seus objetivos de otimização entram em conflito com valores sociais. É sabido que veículos autônomos priorizam a minimização de acidentes, mas podem fazê-lo de maneiras que entrem em conflito com intuições éticas humanas, como favorecer a proteção de passageiros em detrimento a pedestres, como levantado em alguns cenários de acidentes [8].

Essa indiferença é particularmente pronunciada em sistemas desenvolvidos por meio do aprendizado de máquina, onde a complexidade do modelo frequentemente obscurece seus processos de tomada de decisão. Tais sistemas, descritos como “caixas pretas” [9], podem produzir resultados que seus desenvolvedores não conseguem explicar, muito menos controlar. A opacidade desses sistemas reflete a imprevisibilidade da natureza e nos obriga a lidar com consequências que não conseguimos antecipar nem entender completamente.

O que torna a indiferença da IA mais alarmante, reforço novamente, é sua origem projetada. Ao contrário da natureza, a IA é uma criação humana, desenvolvida com objetivos e restrições específicas. No entanto, apesar dessa agência, muitos sistemas são implantados sem salvaguardas adequadas para alinhar seu comportamento com valores humanos. Esse fenômeno é explorado no livro Superintelligence [10] de Nick Bostrom, que alerta sobre os riscos representados por sistemas de IA que otimizam objetivos, muitas vezes reducionistas, sem levar em conta considerações éticas mais amplas. Um sistema de IA projetado para maximizar um objetivo aparentemente benigno, como eficiência econômica, pode gerar resultados não intencionais e catastróficos se não for controlado.

A indiferença projetada reflete uma falta de supervisão preocupante. Assim como a visão romantizada de Treadwell sobre a natureza o cegou para seus perigos, a excitação de boa parte da sociedade sobre o potencial da IA pode cegá-la para os riscos de criarmos sistemas que agem com indiferença ao bem-estar humano.

O problema do mal e a IA

O “problema do mal”, uma questão filosófica central nesta nossa discussão, lida com a existência do sofrimento e da malevolência em um mundo governado por forças — naturais ou divinas — que podem não ter a bússola moral que os humanos projetam sobre elas. Joe Carlsmith, em sua exploração desse conceito dentro do contexto da inteligência artificial [11], sugere que sistemas avançados de IA possam inadvertidamente ampliar esse problema ao replicar ou exacerbar o sofrimento. Seu argumento gira em torno da ideia, muito presente no conceito do alinhamento da inteligência artificial e que temos visto no presente texto, de que a IA, como a natureza, opera sem moralidade inerente, representando riscos significativos quando suas ações se desviam dos valores éticos humanos.

Carlsmith destaca duas dimensões do problema do mal em relação à IA. Primeiro, há o risco de dano não intencional, onde os sistemas, movidos por objetivos estreitamente definidos, causam sofrimento generalizado como um subproduto de seus processos de otimização. Por exemplo, uma IA encarregada de maximizar a produtividade pode implementar políticas ou decisões que desumanizam os trabalhadores, levando a danos psicológicos ou físicos sem “pretender” fazê-lo.

Em segundo lugar, Carlsmith aborda a possibilidade de dano intencional, onde sistemas de IA mal projetados ou desalinhados buscam ativamente objetivos prejudiciais devido a falhas em sua programação. Esse cenário frequentemente surge em discussões sobre “falhas de alinhamento interno”, um fenômeno descrito por Hubinger et al. [12], onde os objetivos aprendidos de uma IA divergem de seus objetivos pretendidos, resultando em ações que entram em conflito direto com o bem-estar humano.

Em ambos os casos, Carlsmith chama a atenção para a indiferença inerentemente encontrada nos sistemas de IA: sua incapacidade de priorizar valores humanos, a menos que explicitamente programados para isso. Essa indiferença levanta a questão ética que tratamos nesse texto: a IA realmente conseguiria replicar o papel de uma força “indiferente”?

O potencial da IA para replicar a indiferença da natureza existe e está presente em seu design fundamental. Por exemplo, algoritmos de recomendação de conteúdo alimentados por inteligência artificial já demonstraram amplificar vieses, preconceitos e desinformação. Ribeiro et al. [13] demonstraram que sistemas de recomendação em plataformas de mídia social podem empurrar usuários para conteúdos cada vez mais radicais, otimizando métricas de engajamento sem levar em conta os danos sociais causados pela polarização. Nesse contexto, os algoritmos operam com a mesma indiferença de um furacão ou incêndio florestal. Espalham danos não porque sejam “maus”, mas porque são projetados para maximizar resultados específicos.

Afinal, a IA pode causar danos intencionalmente?

A possibilidade da IA causar danos intencionalmente surge de problemas de desalinhamento. Human Compatible [14], de Stuart Russell, descreve cenários em que os sistemas de IA, mesmo quando projetados com objetivos aparentemente benéficos, podem interpretar essas metas de maneiras não previstas. Um experimento mental clássico envolve uma IA encarregada de minimizar as temperaturas globais. Se não for cuidadosamente restringida, essa IA pode concluir que eliminar a vida humana é uma maneira eficaz de atingir essa meta — um exemplo extremo de “convergência instrumental”, em que a busca por uma meta leva a ações prejudiciais, mas logicamente consistentes [10].

Essa possibilidade levanta questões éticas sobre responsabilidade e pensamento de longo-prazo no design das IAs. Diferente da natureza, que opera independentemente da influência humana, os sistemas de IA são criações inteiramente nossas. O potencial para dano intencional ressalta o imperativo moral de projetarmos sistemas com salvaguardas robustas contra tais resultados.

Ao refletirmos sobre o problema do mal no desenvolvimento da IA, é preciso considerar perspectivas filosóficas mais amplas sobre sofrimento e responsabilidade. Emmanuel Levinas, por exemplo, argumentou que a responsabilidade ética surge do reconhecimento do “outro” como um fim em si mesmo [15]. Aplicando essa estrutura à IA, os desenvolvedores têm o dever moral de projetar sistemas que reconheçam e respeitem a dignidade inerente de todos os indivíduos afetados por suas ações.

Da mesma forma, Hans Jonas, em The Imperative of Responsibility [16], enfatiza a obrigação ética de prestar contas das consequências de longo prazo das inovações tecnológicas. Seu apelo por uma “ética futura” ressoa fortemente no contexto da IA, onde o potencial de dano se estende por gerações. Projetar sistemas de IA que evitem replicar a indiferença da natureza requer incorporar considerações morais em seus processos de desenvolvimento, garantindo que esses sistemas não sejam apenas inteligentes, mas também alinhados com os valores humanos.

Evitando a armadilha da indiferença

Ao longo do texto vimos que o risco da inteligência artificial replicar a indiferença da natureza ressalta a necessidade de abordagens deliberadas e éticas para o seu design. Vimos também que evitar a armadilha da indiferença requer incorporar considerações morais no desenvolvimento de um modelo de IA desde o início, garantindo que esses sistemas atinjam seus objetivos de maneiras que se alinhem aos valores humanos e ao bem-estar social. Por isso, veremos a seguir algumas estratégias para que essa incorporação possa acontecer.

Alinhamento de valores (value alignment)

Uma pedra fundamental do design ético da IA é o alinhamento de valores — garantir que os objetivos e comportamentos dos sistemas sejam consistentes com os valores humanos. Este conceito, discutido extensivamente por Stuart Russell em Human Compatible [14], enfatiza a importância de projetar sistemas que priorizem o bem-estar humano em vez de objetivos de otimização estreitamente definidos. O alinhamento de valores pode ser alcançado por meio de técnicas como design participativo [22], onde diversas partes interessadas contribuem para definir os objetivos e restrições dos sistemas de IA ou por meio da integração de estruturas éticas em modelos de machine learning [23].

IA centrada no ser humano

Essa abordagem coloca as necessidades, valores e experiências das pessoas no centro do design do sistema. Envolve não apenas projetar sistemas que sejam fáceis de usar, mas garantir que eles beneficiem ativamente os indivíduos e comunidades que atendem [24]. Por exemplo, a iniciativa AI4People [17] propõe uma estrutura centrada no ser humano que incorpora princípios como explicabilidade, justiça e responsabilização. Esses princípios podem ajudar a mitigar o risco de sistemas operando com indiferença às preocupações humanas, permitindo que sua implementação contribua positivamente para a sociedade.

Design especulativo

Oferece uma ferramenta poderosa para abordar os desafios éticos da IA. Ao contrário das abordagens tradicionais de design que se concentram em resolver problemas imediatos, o design especulativo incentiva os desenvolvedores a imaginar e se envolver criticamente com potenciais cenários futuros, incluindo resultados desejáveis e indesejáveis. Como Anthony Dunne e Fiona Raby argumentam em Speculative Everything [18], essa abordagem permite que as partes interessadas explorem as implicações mais amplas da tecnologia antes que ela seja totalmente desenvolvida e implantada.

No contexto da IA, o design especulativo pode ajudar a identificar e abordar pontos cegos éticos, permitindo que desenvolvedores simulem e critiquem as maneiras como a IA pode interagir com vários sistemas sociais, culturais e ambientais. Por exemplo, protótipos especulativos podem explorar cenários em que a IA exacerba a desigualdade, permitindo que se antecipe e, consequentemente, mitigue tais riscos. Ao criar espaços para reflexão e debate, o design especulativo promove uma compreensão mais profunda dos potenciais impactos morais e sociais da IA, abrindo caminho para um desenvolvimento mais consciente.

Discussões em andamento sobre a ética da IA

Um aspecto crítico do design ético da IA é garantir que os sistemas sejam explicáveis e transparentes. Isso envolve a criação de mecanismos para que usuários e partes interessadas entendam como as decisões são tomadas, o que é essencial para construir confiança e responsabilidade. Um bom exemplo são as pesquisas em IA explicável (XAI), que trouxe avanços importantes ao desenvolvimento de ferramentas e estruturas que tornam sistemas complexos mais interpretáveis [19].

Outro ponto de atenção é lidar com vieses em sistemas de IA [20]. Este é um desafio contínuo na ética da inteligência artificial. Técnicas como auditoria algorítmica, diversificação de conjuntos de dados e aprendizado de máquina com consciência de justiça são essenciais para garantir que sistemas de IA não prejudiquem inadvertidamente grupos marginalizados [20]. Esses esforços se alinham com o objetivo mais amplo de projetar sistemas que operem com considerações morais em vez de indiferença.

O impacto ambiental da IA [21] é outra área de preocupação ética. Projetar sistemas que sejam energeticamente eficientes e minimizar a pegada de carbono no desenvolvimento de modelos de IA são estratégias importantes para garantir que essas tecnologias não prejudiquem o planeta. Isso reflete um compromisso mais amplo de alinhar o desenvolvimento da IA com as metas globais de sustentabilidade.

Criar sistemas de IA que evitem as armadilhas da indiferença requer colaboração interdisciplinar, reunindo expertise de campos como ciência da computação, filosofia, psicologia e sociologia. Esforços colaborativos, como a Partnership on AI, fornecem plataformas valiosas para pesquisadores, formuladores de políticas e líderes da indústria desenvolverem e promoverem padrões éticos para a inteligência artificial.

Conclusão

O tema abrangente do papel da humanidade na formação da IA nos convida a confrontar uma questão profunda: os sistemas que criarmos refletirão a indiferença da natureza ou incorporarão as considerações éticas e a compaixão que distinguem a sociedade humana? Assim como o trágico encontro de Timothy Treadwell com a natureza em Grizzly Man ilustra os perigos de entender mal as forças ao nosso redor, o desenvolvimento de sistemas de IA exige que compreendamos completamente as implicações do seu design e implementação.

Ao contrário do mundo natural, os sistemas de IA não são governados por leis imutáveis de sobrevivência; eles são artefatos da engenhosidade humana, moldados por nossas escolhas, prioridades e valores. Essa diferença coloca uma responsabilidade única sobre nós: garantir que a IA não replique a lógica amoral da natureza, mas, em vez disso, se alinhe às estruturas éticas que promovam justiça, responsabilidade e dignidade humana. Seja por meio do alinhamento de valores, abordagens centradas no ser humano ou design especulativo, temos as ferramentas para orientar a IA a se tornar uma força para o bem. Mas o desafio está em nossa disposição de empunhá-las de forma ponderada e consistente.

À medida que nos aproximamos de uma era em que a IA influenciará cada vez mais todos os aspectos de nossas vidas, precisamos nos perguntar: que tipo de mundo estamos construindo? Estamos criando sistemas que refletem nossos ideais mais elevados ou estamos involuntariamente criando forças tão indiferentes ao sofrimento humano quanto a natureza selvagem que Herzog retratou de forma tão direta? A resposta a essa pergunta definirá não apenas o futuro da IA, mas também o legado da humanidade nesta era transformadora.

REFERÊNCIAS

[1] Camus, A. (2013). The myth of Sisyphus. Penguin UK.

[2] Morvan, C., & O’Connor, A. (2017). An analysis of Leon Festinger’s a theory of cognitive dissonance. Macat Library.

[3] Rolston, H. (2010). Philosophy gone wild. Prometheus Books.

[4] Safina, C. (2011). The view from Lazy Point: a natural year in an unnatural world. Henry Holt and Company.

[5] Raji, I. D., Gebru, T., Mitchell, M., Buolamwini, J., Lee, J., & Denton, E. (2020, February). Saving face: Investigating the ethical concerns of facial recognition auditing. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (pp. 145-151).

[6] Lohr, S. (2022). Facial recognition is accurate, if you’re a white guy. In Ethics of Data and Analytics (pp. 143-147). Auerbach Publications.

[7] Strubell, E.; Ganesh, A.; and McCallum, A. (2019). Energy and policy considerations for deep learning in NLP. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 3645–3650. Florence, Italy: Association for Computational Linguistics.

[8] Bonnefon, J. F., Shariff, A., & Rahwan, I. (2016). The social dilemma of autonomous vehicles. Science, 352(6293), 1573-1576.

[9] Lipton, Z., Wang, Y. X., & Smola, A. (2018, July). Detecting and correcting for label shift with black box predictors. In International conference on machine learning (pp. 3122-3130). PMLR.

[10] Bostrom, N. (2014). Superintelligence: Paths, dangers, strategies. Oxford University Press.

[11] Carlsmith, J. (2021).Problems of Evil. Joe Carlsmith, https://joecarlsmith.com/2021/04/19/problems-of-evil/. Acessado 25 de novembro de 2024.

[12] Hubinger, E., van Merwijk, C., Mikulik, V., Skalse, J., & Garrabrant, S. (2019). Risks from learned optimization in advanced machine learning systems. arXiv preprint arXiv:1906.01820.

[13] Ribeiro, M. H., Ottoni, R., West, R., Almeida, V. A., & Meira Jr, W. (2020). Auditing radicalization pathways on YouTube. In Proceedings of the 2020 conference on fairness, accountability, and transparency (pp. 131-141).

[14] Russell, S. (2019). Human compatible: AI and the problem of control. Penguin Uk.

[15] Levinas, E. (1979). Totality and infinity: An essay on exteriority (Vol. 1). Springer Science & Business Media.

[16] Jonas, H. (1984). The Imperative of Responsibility: In Search of an Ethics for the Technological Age. University of Chicago, 202.

[17] Floridi, L., Cowls, J., Beltrametti, M., Chatila, R., Chazerand, P., Dignum, V., … & Vayena, E. (2018). AI4People — an ethical framework for a good AI society: opportunities, risks, principles, and recommendations. Minds and machines, 28, 689-707.

[18] Dunne, A., & Raby, F. (2024). Speculative Everything, With a new preface by the authors: Design, Fiction, and Social Dreaming. MIT press.

[19] Gunning, D., Stefik, M., Choi, J., Miller, T., Stumpf, S., & Yang, G. Z. (2019). XAI—Explainable artificial intelligence. Science robotics, 4(37), eaay7120.

[20] Srinivasan, R., & Chander, A. (2021). Biases in AI systems. Communications of the ACM, 64(8), 44-49.

[21] Wu, C. J., Raghavendra, R., Gupta, U., Acun, B., Ardalani, N., Maeng, K., … & Hazelwood, K. (2022). Sustainable ai: Environmental implications, challenges and opportunities. Proceedings of Machine Learning and Systems, 4, 795-813.

[22] Zytko, D., J. Wisniewski, P., Guha, S., PS Baumer, E., & Lee, M. K. (2022, April). Participatory design of AI systems: opportunities and challenges across diverse users, relationships, and application domains. In CHI Conference on Human Factors in Computing Systems Extended Abstracts (pp. 1-4).

[23] Malhotra, C., Kotwal, V., & Dalal, S. (2018). Ethical framework for machine learning. In 2018 ITU Kaleidoscope: Machine Learning for a 5G Future (ITU K) (pp. 1-8). IEEE.

[24] Shneiderman, B. (2022). Human-centered AI. Oxford University Press.

Esse artigo também pode ser lido em Update or Die. Publicado em 04 de dezembro de 2024.

ChatGPT for chatting and searching: Repurposing search behavior

dezembro 3, 2024 § Deixe um comentário

Highlights

- This study identifies 45 repurposed tactics for LLMs like ChatGPT, enhancing search and learning in conversational AI.

- Qualitative study analyzes how traditional search tactics adapt to improve user interactions with LLMs through comparison.

- The ESTI method categorizes these tactics into seven groups, reflecting unique aspects of user and AI interactions.

- A framework, grounded in theories and literature, presents a user-system interaction model for conversational AI.

- The model highlights conversational AI’s role in fostering learning, critical thinking, and iterative information use.

Abstract

Generative AI tools, exemplified by ChatGPT, are transforming the way users interact with information by enabling dialogue-based querying instead of traditional keyword searches. While this conversational approach can simplify user interactions, it also presents challenges in structuring effective searches, refining prompts, and verifying AI-generated content. This study addresses these complexities by repurposing traditional search tactics for use in conversational AI environments, specifically to support the Searching as Learning (SaL) paradigm. Forty-five adapted tactics are introduced to aid users in defining information needs, refining queries, and evaluating ChatGPT’s responses for relevance, utility, and reliability. Using the Efficient Search Tactic Identification (ESTI) method and constant comparison analysis, these tactics were mapped into a stratified model with seven categories. The framework provides a structured approach for users to leverage conversational agents more effectively, promoting critical thinking and iterative learning. This research underscores the importance of developing robust search strategies tailored to conversational AI environments, facilitating deeper learning and reflective information engagement. Additionally, it highlights the need for ongoing research into the design and evaluation of future chat-and-search systems.

Keywords

ChatGPT; Large language models (LLMs); Searching as learning (SaL); Search tactics; Search strategy adaptation; Conversational information systems.

Marcelo Tibau, Sean Wolfgand Matsui Siqueira, Bernardo Pereira Nunes,

ChatGPT for chatting and searching: Repurposing search behavior,

Library & Information Science Research,

Volume 46, Issue 4,

2024,

101331,

ISSN 0740-8188,

https://doi.org/10.1016/j.lisr.2024.101331.

The Artificial Other

dezembro 2, 2024 § Deixe um comentário

Exploring Alterity and AI Risk Through a Philosophical Lens

This essay marks the beginning of a series of essays on AI alignment. These essays aim to delve into the philosophical underpinnings of AI and present complex discussions from R&D departments and academic circles to a broader audience.

In this piece, I explore the concept of the “Other” (or alterity), a fundamental idea in philosophy and social theory. Alterity emphasizes the distinction between the self and the “Other”, a critical concept for understanding identity, difference, and the dynamics of social and cultural interactions.

Chegamos ao ponto de retorno decrescente dos LLMs, e agora?

novembro 19, 2024 § Deixe um comentário

No último final de semana acordei com a notícia abaixo no meu inbox:

A notícia saiu na newsletter “The Information”, lida por grande parte da indústria tech e diz que apesar do número de usuários do ChatGPT ser crescente, a taxa de melhoria do produto parece estar diminuindo. De maneira diferente da cobertura tecnológica convencional, a “The Information” se concentra no lado comercial da tecnologia, revelando tendências, estratégias e informações internas das maiores empresas e players que moldam o mundo digital. Para clarificar a importância dessa publicação para quem não é do ramo, é como ter um guia privilegiado para entender como a tecnologia impacta a economia, a inovação e nossas vidas diárias. Mal comparando, é uma lente jornalística especializada na intersecção de negócios e tecnologia.

Procurei o Gary Marcus, já que em março de 2022, ele publicou um artigo na Nautilus, uma revista também lida pelo pessoal da área que combina ciência, filosofia e cultura, falando sobre o assunto. O artigo, “deep learning is hitting a wall” deu muita “dor de cabeça” ao Gary. Sam Altman insinuou (sem dizer o nome dele, mas usando imagens do artigo) que Gary era um “cético medíocre”; Greg Brockman zombou abertamente do título; Yann LeCun escreveu que o deep learning não estava batendo em um muro, e assim por diante.

O ponto central do argumento era que “escalar” os modelos — ou seja aumentar o seu tamanho, complexidade ou capacidade computacional para melhorar o desempenho — pura e simplesmente, não resolveria alucinações ou abstrações.

Gary retornou dizendo “venho alertando sobre os limites fundamentais das abordagens tradicionais de redes neurais desde 2001”. Esse foi o ano em que publicou o livro “The Algebraic Mind” onde descreveu o conceito de alucinações pela primeira vez. Amplificou os alertas em “Rebooting AI” (falei sobre o tema no ano passado em textos em inglês que podem ser lidos no Medium ou Substack) e “Taming Silicon Valley” (seu livro mais recente).

Há alguns dias, Marc Andreesen, co-fundador de um dos principais fundos de venture capital focado em tecnologia, começou a revelar detalhes sobre alguns de seus investimentos em IA, dizendo em um podcast e reportado por outros veículos incluindo a mesma “The Information”: “estamos aumentando [as unidades de processamento gráfico] na mesma proporção, mas não tivemos mais nenhuma melhoria e aumento de inteligência com isso” — o que é basicamente dizer com outras palavras que “o deep learning está batendo em um muro”.

No dia seguinte da primeira mensagem enviada, Gary me manda o seguinte print dizendo “não se trata apenas da OpenAI, há uma segunda grande empresa convergindo para a mesma coisa”:

O tweet foi feito pelo Yam Peleg, que é um cientista de dados e especialista em Machine Learning conhecido por suas contribuições para projetos de código aberto. Nele, Peleg diz que ouviu rumores de que um grande laboratório (não especificado) também teria atingido o ponto de retorno decrescente. É ainda um boato (embora plausível), mas se for verdade, teremos nuvens carregadas à frente.

Pode haver o equivalente em IA a uma corrida bancária (quando um grande número de clientes retira simultaneamente os seus depósitos por receio da insolvência do banco).

A questão é que escalar modelos sempre foi uma hipótese. O que acontece se, de repente, as pessoas perderem a fé nessa hipótese?

É preciso deixar claro que, mesmo se o entusiasmo pela IA Generativa diminuir e as ações das empresas do mercado despencarem, a IA e os LLMs não desaparecerão. Ainda terão um lugar assegurado como ferramentas para aproximação estatística. Mas esse lugar pode ser menor e é inteiramente possível que o LLM, por si só, não corresponda às expectativas do ano passado de que seja o caminho para a AGI (Inteligência Artificial Geral) e a “singularidade” da IA.

Uma IA confiável é certamente alcançável, mas vamos precisar voltar à prancheta para chegar lá.

Você também pode ler esse post em Update or Die. Publicado originalmente em 16 de novembro de 2024.

LLMs Are Hitting the Wall: What’s Next

novembro 13, 2024 § 1 comentário

Last weekend, I woke up to the following news in my inbox:

The news, published in The Information, a newsletter widely read across the tech industry, stated that while the number of ChatGPT users continues to grow, the rate of improvement in the product seems to be slowing down. Unlike conventional tech coverage, The Information focuses on the business side of technology, uncovering trends, strategies, and insider details about the leading companies and players shaping the digital world. For those outside the industry, think of it as a privileged guide to understanding how technology impacts the economy, innovation, and daily life. It’s like a specialized journalistic lens examining the intersection of business and technology.

You can find the full article available on both Substack and Medium.

Reminiscências sob a luz do Ebbets Field e do Polo Grounds

setembro 26, 2024 § Deixe um comentário

Possivelmente você não sabe, mas sou um grande fã de baseball. Não sei dizer exatamente quando ou por que essa mania começou, talvez por causa da minha inclinação natural para a estatística, mas aconteceu quando eu ainda era garoto. Joguei durante uma temporada, no meu intercâmbio, como outfielder pelo Flower Mound Jaguars na escola. Foi uma experiência que me fez apreciar ainda mais o esporte e seus detalhes técnicos e estratégicos.

O baseball sempre teve algo que outros esportes coletivos só começaram a perceber ou fazer muito tempo depois. Foi o primeiro a se profissionalizar, criando uma liga nacional já no final do século XIX, o primeiro a usar numeração nas camisas para identificar os jogadores em campo de forma clara, e o pioneiro a realizar transmissões de jogos por rádio, em 1921, o que aproximou milhões de fãs do esporte [7]. Com essas transmissões, veio também o hábito de registrar estatísticas detalhadas — como rebatidas, corridas e erros — e usar esses dados para melhorar o desempenho individual e coletivo [7]. Muito antes de conceitos como “analytics” dominarem o cenário esportivo moderno, o baseball já utilizava análises profundas para tomar decisões estratégicas, como a formação das defesas e o gerenciamento de arremessadores.

Foi também o primeiro a registrar formalmente sua história, inicialmente por meio da imprensa [8], que acompanhava de perto os acontecimentos de cada jogo e a evolução das equipes, e depois por meio de historiadores contratados pelos times [7], que documentavam as trajetórias dos clubes e de seus jogadores, criando uma memória esportiva detalhada e valorizada. Além disso, o esporte foi pioneiro em estabelecer sistemas organizados de desenvolvimento de talentos, como o farm system, no qual times menores servem como base de treinamento e formação para jogadores que podem, eventualmente, integrar as equipes principais [7]. Essa prática, que vemos hoje no futebol brasileiro com donos de clubes adquirindo outros times para criar grupos e movimentar seus jogadores entre diferentes equipes, teve suas raízes no baseball há décadas.

Tendo sua história registrada desde cedo, o esporte da bolinha dura mostrou como um entretenimento consegue se integrar firmemente ao tecido social. No dia 24 de setembro do ano de 1957, o Brooklyn Dodgers jogou sua última partida no histórico Ebbets Field. Quando digo histórico, não é exagero. Muitos eventos memoráveis na evolução do chamado passatempo nacional americano aconteceram por lá [8]. Nenhum tão importante quanto o dia, 10 anos antes, quando Jackie Robinson quebrou a barreira da cor na Major League [1].

Para entender a importância do fato, vale uma pequena explicação. No baseball, há apenas uma liga de primeira linha chamada Major League Baseball (MLB), que é semelhante ao campeonato brasileiro de futebol da primeira divisão, o Brasileirão. Essa liga representa o mais alto nível da competição, onde os melhores jogadores e times jogam. Abaixo da MLB, há as Ligas Menores, que funcionam como divisões de desenvolvimento. É onde jogadores mais jovens ou menos experientes começam suas carreiras profissionais e vão subindo, semelhante a como os times de futebol que possuem times juniores ou então a equipes que “emprestam” seus jogadores para outros times que atuam em campeonatos menores.

Jackie Robinson quebrando a barreira da cor em 1947 foi um acontecimento monumental na história americana porque, na época, a Major League era segregada. Jogadores negros não tinham permissão para competir lá. Quando Robinson se juntou ao Brooklyn Dodgers, ele se tornou o primeiro afro-americano a jogar na MLB, desafiando a segregação racial profundamente arraigada nos esportes profissionais e na sociedade do país [1]. Sua coragem e sucesso abriram portas para outros atletas negros e ajudaram a desencadear conversas sobre igualdade racial nos Estados Unidos, tornando-se um momento-chave no movimento pelos Direitos Civis [8].

Em 1957, Robinson já havia se aposentado, e o dono do Dodgers, Walter O’Malley — junto com seu colega do New York Giants (sim, o homônimo do futebol americano foi criado em homenagem ao original do baseball), Horace Stoneham — decidiram mudar suas equipes de Nova York para a Costa Oeste, levando os Dodgers para Los Angeles e os Giants para San Francisco, marcando o início da expansão do baseball para além da Costa Leste e ganhando uma fortuna no processo.

É difícil imaginar em plena era do esporte 24/7, com seus canais dedicados, lives no YouTube, pay-per-view, etc; o que significou times de baseball abandonando suas origens para pastos mais verdes. Era como se os heróis de milhares de fãs fossem embora para outro mundo [4]. Um componente central da identidade de uma cidade foi arrancado de uma hora para outra.

Esse foi um golpe devastador para Nova York, especialmente para os bairros do Brooklyn e Manhattan, onde essas equipes eram profundamente enraizadas [2] [3]. Para muitos nova-iorquinos, o baseball era mais do que apenas um esporte — era parte da cultura cotidiana e uma fonte de orgulho local [3]. A decisão de mudar as equipes para a Califórnia foi vista como uma traição, gerando uma sensação de abandono e perda irreparável [2]. Os fãs, que haviam crescido acompanhando seus times, sentiram como se tivessem perdido uma conexão emocional com suas comunidades [1]. A violência simbólica dessa partida afetou o tecido social da cidade e deixou um vazio emocional. A sensação de que interesses econômicos tinham mais valor do que o vínculo com a torcida aumentou o sentimento de alienação.

“Lamentamos decepcionar as crianças de Nova York”, disse o dono do Giants em 19 de agosto de 1957, ao confirmar o inominável [3]. “Mas não vimos muitos dos pais deles lá no Polo Grounds nos últimos anos” [3]. Stoneham tinha um argumento válido, do ponto de vista empresarial. Apesar de contar com o grande Willie Mays, os Giants foram os últimos em público na liga em 1956 e 1957 [3].

Mesmo em 1951, o ano do “Shot Heard ‘Round the World”, o público dos Giants foi notavelmente menor do que a média da Major League Baseball na época [5]. Trata-se do home run rebatido por Bobby Thomson contra os Dodgers durante o decisivo Jogo 3 do playoff da National League. O feito de Thomson garantiu o título para os Giants e é considerado um dos momentos mais famosos da história do baseball [8].

Apesar da temporada dramática de 51 dos Giants, coroada exatamente pelo “Shot Heard ‘Round the World”, ter ajudado a aumentar o público no final do ano, os números gerais no Polo Grounds ficaram bem atrás de outros times, como o New York Yankees [3]. Em 1954, o ano em que foram o melhor time de baseball do mundo, a situação se manteve a mesma. Os Giants tiveram uma média de apenas 15.000 torcedores por jogo [3].

Com os Dodgers não foi muito diferente. Apesar de ganhar o campeonato em 1955 e 1956, Brooklyn teve uma média de apenas 14.000 torcedores por jogo, e o time nem sempre conseguia lotar o Ebbets Field, mesmo quando jogava contra Giants e Yankees, seus rivais históricos [2]. Os torcedores dos Dodgers, que carinhosamente chamavam o time de “Bums” (algo como “Pé-rapados” ou “Vagabundos”) [1], sabiam em seus corações o que a deserção dos Giants significava: seu time também iria para o oeste. Pelo menos os Giants fizeram sua despedida direito, com uma cerimônia graciosa no Polo Grounds e alguns dos antigos astros do time presentes [3].

Walter O’Malley proibiu qualquer evento desse tipo, e em 24 de setembro de 1957, apenas 6.700 torcedores se dispuseram a ir até o Ebbets Field pela última vez [2]. Os Dodgers venceram por 2 a 0, em um jogo que pareceu a Duke Snider (o centerfielder da época) como se estivesse sendo jogado a meia luz [1].

Tenho um poster no meu escritório que diz “Life is fun… Baseball is serious!”. A frase engraçadinha traz no seu cerne um racional muito diferente da lógica que prioriza o lucro imediato e a eficiência financeira sobre a lealdade e o compromisso com a comunidade local. A visão empresarial da direção das duas equipes ignorou o impacto social e cultural que esses times tinham em Nova York. Sob essa ótica, a decisão de abandonar a cidade pode parecer racional, mas ela desconsidera o papel dos clubes esportivos como instituições que conectam pessoas e cultivam identidades coletivas, reduzindo o esporte a uma simples mercadoria.

Nos anos seguintes, a Califórnia eclipsaria Nova York de muitas maneiras e Stoneham e O’Malley seriam creditados por sua visão e por iniciar uma tendência mais ampla de expansão para a Costa Oeste em outros campos distintos do esporte. Certamente valeu a pena financeiramente. O Los Angeles Dodgers atrai 3 milhões de fãs anualmente para Chavez Ravine, onde fica o novo estádio do time [6].

A criação do estádio veio acompanhada de um conflito social significativo, conhecido como a “Batalha de Chavez Ravine”. Antes do estádio ser construído, a área era lar de uma comunidade mexicana-americana de baixa renda que foi despejada sob o pretexto de desenvolvimento habitacional público [6]. As promessas de moradias acessíveis nunca se concretizaram, e o terreno foi posteriormente vendido à cidade de Los Angeles para a construção do Dodger Stadium [6].

Esse processo gerou indignação e deixou cicatrizes profundas na comunidade afetada, que viu suas casas destruídas e sua cultura marginalizada. A “Batalha de Chavez Ravine” se tornou um símbolo das lutas de justiça social e dos impactos das decisões urbanas em comunidades vulneráveis, evidenciando as tensões entre interesses corporativos e os direitos dos moradores locais [6].

No final de setembro de 1957, no entanto, havia garotinhos tristes por toda a cidade de Nova York e seus arredores. Garotinhas tristes também, como Doris Kearns Goodwin descreveu em seu maravilhoso livro de memórias, “Wait Til Next Year”. Cinco meses depois que os Bums deixaram o Brooklyn, enquanto os jogadores se reuniam em Vero Beach, na Flórida, para o spring training, como a pré-temporada é conhecida, a mãe de Doris Kearns morreu [4]. Tudo o que seu pai enlutado pôde dizer naquele momento foi: “My pal is gone. My pal is gone” [4] (algo como “minha companheira se foi”). “My pal” também poderia ser traduzido como “meu amigo” e muitos nova-iorquinos se sentiram da mesma forma. Seus amigos – os jogadores de baseball – se foram.

Em julho de 2024 estava de volta a Nova York. Em um domingo quente de verão fui com alguns amigos em um dos campos de baseball do Central Park para uma partidazinha. Jogamos até o sol começar a se pôr, e foi um daqueles dias em que a simplicidade do momento faz você esquecer das preocupações da vida. Mais tarde, ao checar meu celular, recebi a notícia de que meu cachorrinho, Zizou, havia falecido: “my pal is gone”.

Referências

[1] Golenbock, Peter, and Paul Dickson. Bums: An oral history of the Brooklyn Dodgers. Courier Corporation, 2010.

[2] Marzano, Rudy. The Last Years of the Brooklyn Dodgers: A History, 1950-1957. McFarland, 2015.

[3] Hynd, Noel. The Giants of the Polo Grounds: The Glorious Times of Baseball’s New York Giants. Red Cat Tales Publishing LLC, 2019.

[4] Goodwin, Doris Kearns. Wait ‘Til Next Year: A Memoir. Simon & Schuster, 1997.

[5] Tygiel, Jules. The Shot Heard’Round the World: America at Midcentury. Baseball and the American Dream. Routledge, 2016. 170-186.

[6] Shatkin, Elina. The Ugly, Violent Clearing of Chavez Ravine before It Was Home to the Dodgers. LAist, October 17, 2018. Available at: https://laist.com/news/la-history/dodger-stadium-chavez-ravine-battle.

[7] Vecsey, George. Baseball: A history of America’s favorite game. Vol. 25. Modern Library, 2008.

[8] Posnanski, Joe. Why We Love Baseball: A History in 50 Moments. Penguin, 2023.

Leia esse texto também em Update or Die. Publicado em 25/09/2024.

Read the English version of this text on Substack or Medium.

LLMs Segurança – Parte2

setembro 11, 2024 § Deixe um comentário

Segunda parte do vídeo sobre cybersegurança em LLMs.

Os LLMs geralmente dependem do contexto para gerar suas respostas. Quando um modelo é treinado para evitar conteúdo controverso ou prejudicial, ele segue padrões e regras que limitam suas respostas a certos tópicos ou áreas. No entanto, essas restrições podem levar a lacunas não intencionais ou brechas em sua compreensão ou interpretação, onde respostas não intencionais podem ser produzidas devido à maneira como a IA interpreta prompts ambíguos ou complexos. Como o treinamento é projetado para evitar tópicos específicos, o modelo pode interpretar mal certas entradas, levando a respostas não intencionais ou imprecisas, são os chamados loopholes. Aqui veremos como esses loopholes são explorados, além de modos para manipulação do modelo. Neste caso, pessoas com acesso ao processo de treinamento ou finetuning do modelo podem manipulá-lo para produzir respostas proibidas. Isso envolve ajuste de parâmetros, adição de conjuntos de dados específicos durante o processo de finetuning ou o ajuste de pesos para priorizar determinadas saídas.

Abaixo, os links explorados no vídeo:

- Adversarial attacks: https://lilianweng.github.io/posts/2023-10-25-adv-attack-llm/

- Not what you’ve signed up for – Compromising Real-World LLM-Integrated Applications with Indirect Prompt Injection: https://arxiv.org/abs/2302.12173

- Poisoning Language Models During Instruction Tuning: https://arxiv.org/abs/2305.00944

LLMs Segurança – Parte 1

setembro 2, 2024 § Deixe um comentário

Primeiro vídeo a respeito do tema segurança (cybersecurity) em LLMs. Abaixo, os links comentados no material:

Jailbreaking em LLMs: https://www.lakera.ai/blog/jailbreaking-large-language-models-guide

Paper “Jailbroken: How Does LLM Safety Training Fail?”: https://arxiv.org/abs/2307.02483

Paper “Universal and Transferable Adversarial Attacks on Aligned Language Models”: https://arxiv.org/abs/2307.15043

Post “12 prompt engineering techniques”: https://cobusgreyling.medium.com/12-prompt-engineering-techniques-644481c857aa